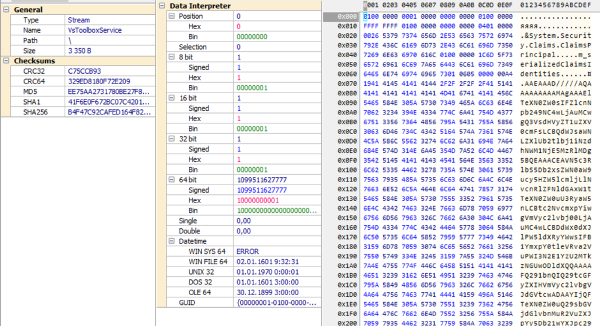

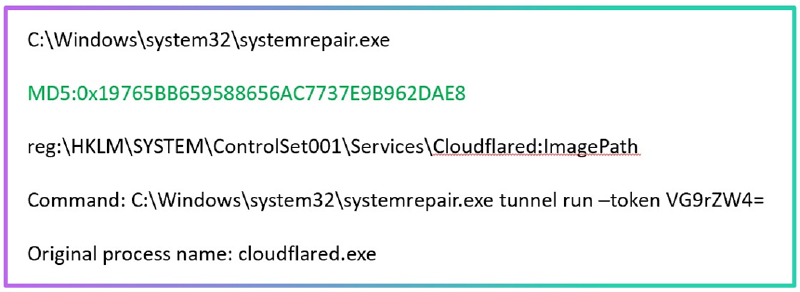

Here’s an interesting situation: a SOC analyst receives an alert indicating the launch of a suspicious service. However, the process name suggests that it’s a legitimate system process (see the first line of the screenshot). Therefore, the analyst ignores the alert.

And that’s a mistake. A closer look at the additional data shows that what was actually launched is a tunneling tool masquerading as a system process. Two details should have raised the analyst’s suspicion: the binary had been renamed, and it had been placed in the System32 folder.
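To make the “renamed binary” clue concrete, here is a minimal sketch of how such a check could look. It assumes process-creation events shaped like Sysmon Event ID 1, which carries both an `Image` field (the path on disk) and an `OriginalFileName` field (the name embedded in the file’s version metadata). The `looks_renamed` helper and the sample event, including the `tunnel.exe` name, are hypothetical and not taken from the screenshot.

```python
from ntpath import basename  # parses Windows-style paths on any OS


def looks_renamed(event: dict) -> bool:
    """Return True when the on-disk name differs from the embedded original name."""
    image_name = basename(event.get("Image", "")).lower()
    original_name = event.get("OriginalFileName", "").lower()
    # Assumption: an empty value or "-" means the metadata is missing,
    # so there is nothing to compare and the event is not flagged.
    if not image_name or original_name in ("", "-"):
        return False
    return image_name != original_name


# Hypothetical event: a tool renamed to look like a system process.
event = {
    "Image": r"C:\Windows\System32\svchost.exe",
    "OriginalFileName": "tunnel.exe",  # hypothetical name, for illustration only
}
print(looks_renamed(event))
```

A mismatch is only a signal to dig further, not a verdict: some legitimate binaries also have differing names, so the result is best treated as one more piece of evidence for or against the hypothesis.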

Why did the analyst make such a mistake? The cause is a common cognitive bias known as the “anchoring effect”: the first piece of information about something new has the strongest influence on a person (not just an analyst, but anyone). This principle is even reflected in folk sayings (“clothes make the man”, “the first word is more valuable than the second”).

And here’s another example: a certain detection rule often generates false positives (FP). Having gotten used to this, the SOC analyst closes yet another alert from that rule without paying due attention to it… and this time it was a real attack.

This is another well-known cognitive bias, rooted in reasoning by analogy. It’s also reflected in folk culture, in the story of the boy who cried “wolf!” too often.

Our colleague, SOC analyst Taha Hakim, has collected examples of such thought patterns and “blind spots” typical of cybersecurity specialists in his article. He also proposes a number of methods that help analysts identify such biases and flaws in their own logic.

The main idea is not to rush to explain a phenomenon based on the first facts received. Instead, consider your ideas as hypotheses that need to be either proven or disproven with additional data.

More details can be found in the article “Human factor in cyber defense: when the enemy is our own mindset”.