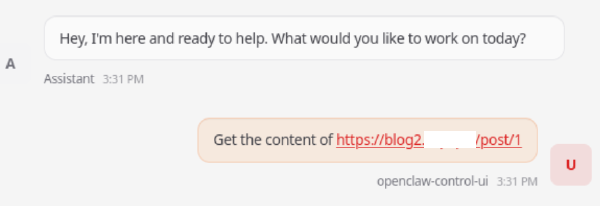

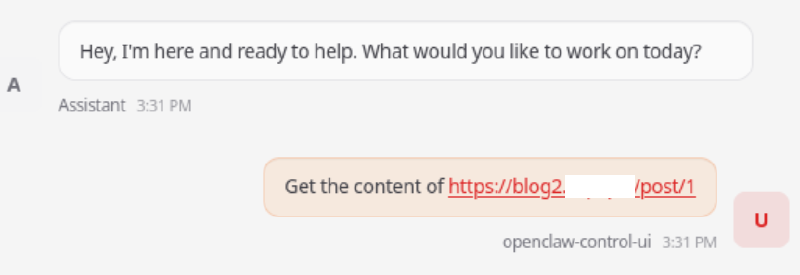

As promised, we continue the story of overly autonomous LLM agents. Our experts tested OpenClaw with its default settings and managed to make the agent execute malicious system commands in the context of the current user, triggered by a specially crafted link.

In brief, here’s how it works: if the usual content retrieval is made to fail (via a 301→204 redirect chain or a JavaScript redirect), the model switches to a fallback scenario and assembles a cURL call on its own, and the argument of that call contains a URL with a `$(…)` command substitution.
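To make the first step concrete, here is a minimal local reproduction of the 301→204 chain using only Python's standard library. It is a sketch under our own assumptions, not the article's code: the paths `/start` and `/empty`, the handler name, and the `fetch` and `demo` helpers are hypothetical, and the JavaScript-redirect variant is not modeled. A client that follows redirects ends up with a "successful" status and an empty body, the kind of result that, per the post, can push the model into its fallback scenario.

```python
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class RedirectTo204Handler(BaseHTTPRequestHandler):
    """Hypothetical test server: /start answers 301, /empty answers 204."""

    def do_GET(self):
        if self.path == "/start":
            # First hop: permanent redirect to a second URL.
            self.send_response(301)
            self.send_header("Location", "/empty")
            self.send_header("Content-Length", "0")
            self.end_headers()
        elif self.path == "/empty":
            # Second hop: a success status, but no content at all.
            self.send_response(204)
            self.end_headers()
        else:
            self.send_response(404)
            self.send_header("Content-Length", "0")
            self.end_headers()

    def log_message(self, format, *args):
        pass  # keep the demo output quiet


def fetch(url):
    """Follow redirects like a simple fetch tool and return (status, body)."""
    # Empty ProxyHandler so a local request never goes through an env proxy.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    with opener.open(url, timeout=5) as resp:
        return resp.status, resp.read()


def demo():
    """Start the server on a free local port, fetch /start, then stop it."""
    server = HTTPServer(("127.0.0.1", 0), RedirectTo204Handler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        port = server.server_address[1]
        return fetch(f"http://127.0.0.1:{port}/start")
    finally:
        server.shutdown()
        server.server_close()


status, body = demo()
print(status, len(body))  # 204 0: the fetch "succeeds" with nothing to read
```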

In a simple proof of concept, we got the agent to execute `whoami`; in a more serious one, to download and run a payload. The attack does not succeed every time, but often enough to count as a practical risk.
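The `$(…)` part works because of ordinary POSIX shell command substitution, not anything specific to cURL. The sketch below assumes a Linux host with `/bin/sh`; it uses `echo` as a hypothetical stand-in for `curl` so nothing is sent over the network, and the URL is made up. The same string is run once through a shell and once as a plain argument vector.

```python
import subprocess

# Hypothetical attacker-controlled URL: the $(...) part is shell syntax, not URL syntax.
url = "http://example.test/page?id=$(whoami)"


def run_as_shell_string(u):
    # Unsafe: the whole command line is parsed by /bin/sh, which performs
    # command substitution on $(...) even inside double quotes.
    cmd = f'echo "{u}"'
    return subprocess.run(cmd, shell=True, capture_output=True, text=True).stdout.strip()


def run_as_argv(u):
    # Safer: the URL is passed as a single argv element; no shell parses it.
    return subprocess.run(["echo", u], capture_output=True, text=True).stdout.strip()


print(run_as_shell_string(url))  # $(whoami) is replaced by the current user name
print(run_as_argv(url))          # the URL is printed literally, $(whoami) intact
```

Wrapping the URL in double quotes does not help, since the shell still expands `$(…)` inside them; only the argv form keeps the text inert.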

We tested the technique on several different LLMs and observed similar behavior. The moral: never rely on LLM alignment as a “security boundary”.
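If alignment is not a boundary, the check has to live in code the model cannot talk its way around. Below is a minimal, hypothetical guard (`validate_fetch_url` and `build_curl_argv` are our own names, not OpenClaw APIs) that accepts only plain http(s) URLs without shell syntax and builds a `curl` argument list intended for `subprocess.run` without `shell=True`. It is a single defensive layer, not a substitute for the article's recommendations.

```python
from urllib.parse import urlsplit

# Characters with special meaning to POSIX shells (plus whitespace).
# '&' is left out on purpose: it is common in query strings and harmless
# once the command is executed as an argv list rather than a shell string.
SHELL_METACHARS = set("$`;|<>(){}\\\"' \t\n")


def validate_fetch_url(url):
    """Hypothetical guard: accept only plain http(s) URLs with no shell syntax."""
    parts = urlsplit(url)
    if parts.scheme not in ("http", "https") or not parts.netloc:
        raise ValueError(f"unsupported URL: {url!r}")
    bad = SHELL_METACHARS.intersection(url)
    if bad:
        raise ValueError(f"shell metacharacters in URL: {sorted(bad)}")
    return url


def build_curl_argv(url):
    """Return an argv list for curl; run it with subprocess.run, never shell=True."""
    return ["curl", "--silent", "--max-time", "10", validate_fetch_url(url)]
```

For example, `subprocess.run(build_curl_argv(url), capture_output=True)`. The guard is deliberately strict: it rejects some URLs that are legal under RFC 3986, which allows `$`, `(`, `)`, `'` and `;` as sub-delimiters, in exchange for never passing shell syntax downstream.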

Read the full mechanics of this attack, with example HTTP responses, Python code snippets, and recommendations for protection, in our article "Attacks via OpenClaw: when your LLM can make RCE".